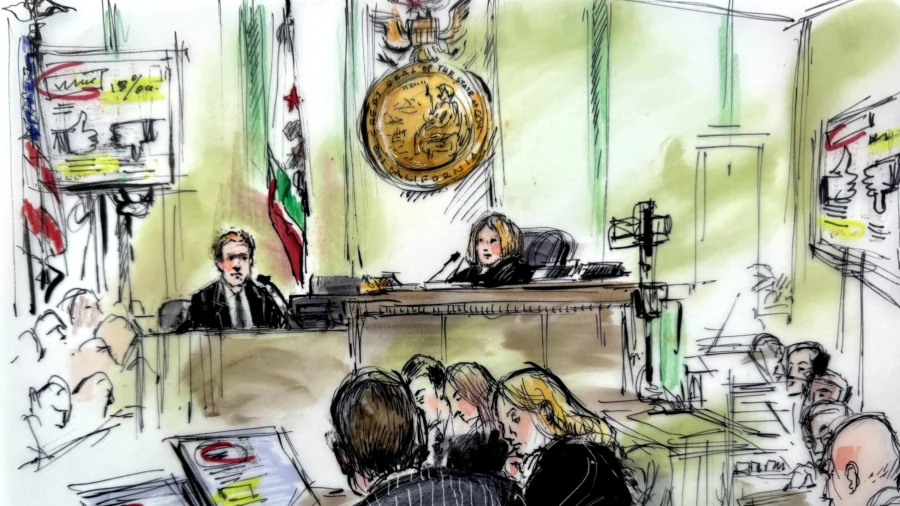

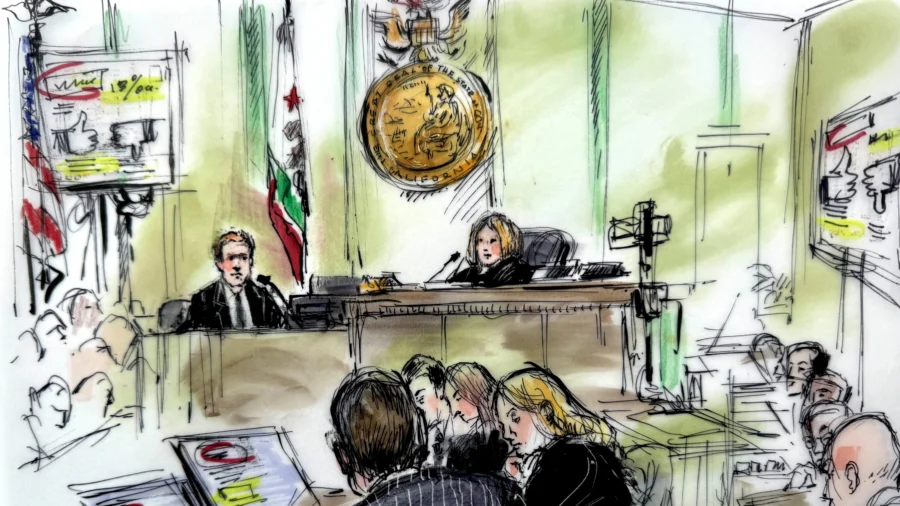

LOS ANGELES—After four weeks, attorneys representing the plaintiff in a landmark jury trial in Los Angeles Superior Court—considering whether two of the world’s largest social media companies may be held liable for alleged harms to children resulting from “addictive” design features—rested their case Friday.

The plaintiff, Kaley, claims she became addicted to social media as a child and has suffered serious psychological harm as a result, including body dysmorphia, depression, anxiety, and suicidal ideation.

Her lawsuit, which names Instagram (parent company Meta) and YouTube (parent company Google) as defendants, is one of a handful of bellwether trials expected to have a profound bearing on thousands of related, consolidated civil injury suits brought by parents, children, school districts, and district attorneys.

TikTok (parent company ByteDance) and Snapchat (parent company Snap Inc.) were co-defendants in the lawsuit but settled privately shortly before trial; the two companies remain named in related cases.

On Friday, plaintiff’s attorneys also read a section from the 2025 deposition of Kaley’s mother, Karen.

Asked if she considered it her job to supervise her daughter’s social media use, Karen said, “I just wouldn’t think it would be an issue. I haven’t really had the experience before … I only recently learned how to text.”

She said she didn’t know Kaley was on social media apps and didn’t understand how accessible they were or what her daughter could be exposed to.

“If you’re asking me now about the damage social media does, I would have never given her a phone,” she said. “I didn’t have any idea about the damage and what is out there … what it could do to children.”

As their last witness, plaintiff’s attorneys called Brook Istook, an expert in social media safety, who told the jury that both defendants hide and downplay risks to children and obstruct parental supervision.

“Parents don’t know and [risks] are not disclosed in ways parents can understand. I’ve reviewed transparency reports and information companies put out, but they’re not being transparent about the types and frequencies of risk their children are going to encounter,” Istook said.

Istook said her review of Meta’s internal documents revealed that company leadership knew about addiction issues and their link to manipulative, or “dark pattern,” designs used to build platform features.

Social media companies are shielded from liability claims related to third-party content posted on their sites by both the First Amendment and Section 230 of the Communications Decency Act of 1996.

Kaley’s case instead focuses narrowly on the platforms’ function and design: features like notifications, “infinite scroll,” and the defendants’ proprietary algorithms, which her lawyers claim are driving an unprecedented mental health crisis among her generation.

While both companies have added a series of safety features and parental controls in recent years, Istook said these measures are essentially “Band-Aids” that don’t solve the problem of addictive or unsafe design engineered to “capture and keep a child’s attention.”

Istook also testified that Meta knew as early as 2015 that teens were using fake Instagram accounts, or “finstas,” to evade parental supervision, and that the company even established a project to grow the number of such accounts by showing teens how to create them and switch between accounts in the app.

The defense on Friday also presented its first two witnesses through videotaped depositions.

In them, a vice principal and a school counselor at Kaley’s high school recounted disciplinary incidents and claims she had made about difficulties in her home life but did not recall social media ever being an issue.

Their recollections contrasted with the picture plaintiff’s witnesses painted of a shy, insecure adolescent paralyzed by social anxiety. Kaley was diagnosed with social phobia in middle school.

“She was a very likable young lady,” the school counselor said.

The trial is expected to conclude by March 20.